Abstract

We organized ten virtual conferences between February and October of 2021. These conferences were very well received, and at each conference participants mentioned that it was the best virtual conference they had ever attended. They asked us to share how we did this. We do not have a secret formula, but we tried to create conferences where participants were actively involved during the sessions. Instead of live presentations, we combined pre-recorded presentations with live discussions among presenters. We present here the background of the conferences and how we implemented them, and we evaluate what worked and what did not work very well.

Context

In the spring of 2019, we proposed to hold the global biennial conference of the International Association for the Study of the Commons (IASC) in Arizona, USA, at the Tempe campus of Arizona State University in October 2021. Such a conference would get about 600 to 800 participants from all over the world for a week-long program including field trips. In early March of 2020, we were ready to distribute the call for abstracts. We had booked affordable conference facilities, created a website, and started planning the field trips. When COVID-19 shut down the world in mid-March 2020, it became rapidly clear we had to think about a plan B. We ended up with a series of 10 virtual conferences in 2021. In this post, I will share our experiences and lessons learned.

The 10 conferences had 100 to 300 registered participants each, with a total of 1120 unique participants. Our approach for organizing these conferences was meant for this size and may not scale up to much larger numbers.

Team

Although I am writing the blogpost, the conferences were organized and implemented by a large number of individuals. Caren Burgermeister, the executive director of the IASC and international coordinator of the Center for Behavior, Institutions and the Environment at Arizona State University, was instrumental in dealing with the Zoom coordination, calendar invites, handling emails and payments, coordinating the tech hosts of the live events, editing recordings of live panels and managing the outreach on social media. Luz-Andrea Pfister, from Pfister Lab, designed and implemented the websites of the conferences, and kept them up to date during the conferences. We had a number of volunteers who acted as tech hosts of live sessions, including Donald Potter, Manuela Vanegas Ferro, Dane Whittaker, and Alfredo Moreno. Each conference had one or more chairs who organized, with their steering committee, the content and moderation of events: Alejandro Garcia Lozano, Marty Anderies, Rimjhim Aggarwal, Elizabeth Baldwin, Edella Schlager, Melanie Dulong de Rosnay, Charlie Schweik, Mike Schoon and Tom Evans.

Past virtual conferences

For several years before the pandemic, I had been experimenting with virtual conferences. The aim was to provide low-carbon options for conferences and to increase their accessibility for those who don’t have the means to travel around the world to attend meetings. We held the first virtual conference of the IASC in Fall 2018, combined with a short video contest. We had about 60 videos, and only IASC members were able to access the conference material. This requirement was motivated by our need to increase membership numbers to earn revenue to fund our secretariat. During a period of 3 weeks, people could watch the videos and comment on them. The conference was a modest success. We increased membership numbers, but the level of interaction during the conference was limited.

For the summer of 2020, we had planned – before the pandemic happened – a second virtual conference, namely on African commons. We made a few changes compared to the first conference. We required speakers to be IASC members, and submitted talks were freely available for everyone to watch. However, only IASC members could comment on the videos (since they were logged into the system). We had a sponsor which allowed us to sponsor memberships of a significant number of participants. Again, we had a duration of about 3 weeks where people could comment on the videos, but we also had various live panels over the duration of the conference, including some networking events using breakout rooms in Zoom. We also allowed for contributions in French and English and used automatic translation from YouTube to provide subtitles. The conference was very successful with more than 2300 different participants during the conference.

Defining the scope of the virtual conferences

When we decided to go virtual for IASC 2021, we made a few important decisions. First, we did not want to have one big conference. We found that too risky, since we did not know of platforms that could reliably support a fruitful conference with hundreds of participants. We also saw this as an opportunity to experiment and decided to have a series of conferences during the year, where the first 9 were topical conferences, based on the original tracks of the conference, and the 10th and final conference focused on synthesis and membership meetings.

Based on previous experiences, we also decided to have short conferences of a few days each with a combination of pre-recorded videos and live panels focused on discussions. We did not want to have lengthy live presentations, for three reasons: many of our participants are from localities with limited bandwidth, participants are spread over diverse time zones, and we do not think people like to watch lengthy presentations (and it is difficult to keep presenters in check in a Zoom meeting). Presenters of pre-recorded talks who wanted to could join a discussion panel based on common issues related to the videos. This would also stimulate presenters to watch each other’s talks. We also asked for panels where panelists debate a specific topic.

We had no keynote presentations, something we had decided on for the original conference, to stimulate more debate and discussions, instead of one-direction monologues.

Since our organization needs revenue to fund the secretariat, and the local organizer, Arizona State University, also needs funds to implement the conferences, we did not make the conferences freely available. We needed to obtain about $25,000 to cover all direct costs and an additional $50,000 in renewals of membership fees to support the secretariat. We charged $10 per conference for IASC members and $50 for non-members, and organizations could act as sponsors. With the sponsorships, we could waive the registration fees for those who requested this when submitting their abstract, mainly participants from the global south and/or students. In the end, we made a surplus, which was donated to the IASC.

Tools we used

We used a number of different tools to implement the various parts of the conferences. We used these tools because we had already successfully used them, or because our university has a site license. We could have used other tools but were eager to use tested, widely applicable tools, so that participants were likely to be familiar with them and the software would be available in many countries. Nations like China, India, Iran, Indonesia, etc., did not allow certain software or websites (such as payment systems, or Google-based tools in China) and we had to make adjustments.

The website was implemented in WordPress, like the rest of the IASC websites, using the plugin Elementor. The website was connected to WildApricot, which manages our membership database and payments.

The videos were submitted by participants using WeTransfer and Smash and hosted as unlisted YouTube videos on our IASC YouTube channel. For 2 conferences with a lot of Chinese participation we also hosted the videos on OneDrive, since YouTube is not available in China.

We used Zoom for the live events, and Wonder.me as a platform to mingle around in a virtual space. Participants could sign up to a dedicated Slack workspace for each conference to have an alternative way of interacting, also after the conference. During the conferences, we created a joint music playlist on the conference theme using Spotify.

Although those tools all worked well, we expect to make changes in future conferences since new tools become available regularly, but we will only make adjustments after careful testing of alternatives.

Workflows

We now provide the main actions to implement the conference, their timing, and time commitment.

Call for Abstracts: The call went out 6-9 months before the conference. Each call was crafted by the chairs and their steering board. It provided information about the types of submissions we would consider, and we had a website with information on costs, the creation of videos, and a form to submit abstracts.

We distributed the call via newsletters of the IASC, their social media channels, and via emails from chair and steering members. The due date for abstracts was typically around 5-7 weeks before the conference. About 90% of the abstracts were submitted on the due date or the few days before the due date. We did not immediately close the submission page but waited for a few days for late submitters.

Accepting abstracts and scheduling conference: After the due date of submissions, we created a spreadsheet of the various submissions. Since not everyone submits the proper information, we had to check with various submitters, and get all submissions in the right categories.

Once we had cleaned up the database, the chair and steering committee reviewed the abstracts. Since abstracts are an imperfect basis for evaluating quality, we only rejected proposed talks that did not fit into the scope of the conference. Typically, those were just a few per conference.

Each submitter received an email on the outcome of the review process four to five weeks before the conference. If accepted, they received instructions on the next steps: the deadline for video submissions and the maximum length of the video, the link to practical information on how to create a video, and whether they received a fee waiver, if they had requested one.

By that time, we also started to create a schedule for the conference. We tried to accommodate the time zones of the speakers. When we had sessions of individual pre-recorded talks with common themes, a moderator had to be recruited by the chair. With some sessions we checked whether the timing was working out, especially if this was a panel with practitioners, but in general, we created the schedule based on topics, time zones, and allowed some breaks in the schedule for networking and downtime.

We then sent calendar invites to all participants with the session they were scheduled in. We used calendar invites to reduce the mistakes in time zones and get the event on the calendar with the right time slot. We also created a schedule for the website where people could check the time of the event in their time zone. Typically, the public schedule was available 2 to 3 weeks before the conference.
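The "check the time in your time zone" idea can be illustrated with a minimal sketch. It is not the tool we used on the website; the session time and the list of cities are hypothetical, and the offsets are hard-coded for places that do not observe daylight saving time. A real schedule would rather rely on a time zone database (for example Python's `zoneinfo` module) so that daylight saving changes are handled correctly.

```python
from datetime import datetime, timedelta, timezone

# Hypothetical fixed UTC offsets for places without daylight saving time.
OFFSETS = {
    "Phoenix": timezone(timedelta(hours=-7)),
    "Nairobi": timezone(timedelta(hours=3)),
    "Kolkata": timezone(timedelta(hours=5, minutes=30)),
    "Beijing": timezone(timedelta(hours=8)),
}


def local_start_times(start_utc):
    """Return the session start as a local time string for each city."""
    if start_utc.tzinfo is None:
        raise ValueError("start_utc must be timezone-aware")
    return {
        city: start_utc.astimezone(tz).strftime("%Y-%m-%d %H:%M")
        for city, tz in OFFSETS.items()
    }


# Hypothetical live session starting at 13:00 UTC.
session_start = datetime(2021, 10, 12, 13, 0, tzinfo=timezone.utc)
times = local_start_times(session_start)
for city, local in times.items():
    print(f"{city}: {local}")
```

Storing every session once in UTC and converting only for display keeps the schedule and the calendar invites consistent with each other.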

Video submissions: The videos were due about 10 days before each conference. We sent out a reminder a week before the due date to all those speakers who had not submitted their video. Since people need some time to watch videos before the live sessions where they discuss the videos, we aimed to have all the conference material, including videos, available 4 to 5 days before the conference.

Each submitted video had to be processed. After downloading we checked to make sure the audio was working, and sometimes we had to go back to the submitter when a video was submitted in a format we could not deal with, if the presentation was way past the 10-minute time limit or if there was inappropriate content for a scholarly conference. We did not do any editing of the videos. We uploaded them to YouTube, as unlisted, not informing YouTube channel followers, and not allowing comments. The video links were then embedded in the conference website where participants could make comments, and the presenter was notified if a comment was made. On average a video received 4 to 5 comments during a conference.

A challenge is that most presenters will submit their video on the due date, sending files from a few megabytes to a few gigabytes. The person who is handling those videos will need high-speed internet to process them in a timely manner. It is also not uncommon that individuals submit an improved version of the video after you have already processed the previous version.

The video submission is also the time we started to get questions about registration. To submit their video, the participants had to be registered for the conference. Some participants were surprised the conference was not free (since it was online …). By going through this process, we also informed the participants how to log in to the conference website. When they registered for a conference, they received a key to enter the website, and no matter what we did, there were many people who couldn’t find the conference key.

We sent individual reminders the day after the due date to those who had not submitted their video, and who did not send a cancellation or a request for an extension of the deadline.

We found that about 10% of those with accepted abstracts did not submit their video. There were many different reasons for this. Often it was a lack of time or bad planning in creating the video, but other reasons included getting COVID, or not being allowed by their sponsor to give the presentation.
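The timing described in this section can be turned into a simple backward plan from the first day of a conference. The sketch below is only an illustration: the lead times are single values picked from within the ranges mentioned above (for example, 42 days for the "5-7 weeks" abstract deadline), and the conference date is hypothetical.

```python
from datetime import date, timedelta

# Days before the conference start; each value is an assumption chosen
# from within the ranges described in the text.
LEAD_DAYS = {
    "call for abstracts": 182,         # roughly 6 months
    "abstracts due": 42,               # 5-7 weeks
    "decisions sent": 28,              # 4-5 weeks
    "video reminder": 17,              # a week before the video deadline
    "public schedule": 14,             # 2-3 weeks
    "videos due": 10,                  # about 10 days
    "conference material online": 4,   # 4-5 days
}


def milestones(conference_start):
    """Return (date, milestone) pairs sorted from earliest to latest."""
    items = [
        (conference_start - timedelta(days=days), name)
        for name, days in LEAD_DAYS.items()
    ]
    return sorted(items)


# Hypothetical conference start date.
plan = milestones(date(2021, 10, 12))
for when, name in plan:
    print(when.isoformat(), name)
```

With ten conferences in one year these plans overlap, so a combined calendar of all milestones helps to spot weeks in which several deadlines coincide.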

Training for tech hosts: Since Arizona is in the western part of the USA, most live events, which were scheduled to accommodate participants around the world, happened in the early hours in Arizona. Each live event happened in a Zoom room. The links to the Zoom rooms were available by a click on the conference program when people were logged into the conference website. Since each live session would be recorded, stored in the cloud, edited, uploaded to our YouTube channel, and then added to the conference website (a few hours after the live session), we used the Zoom account we have via ASU. This meant that only people affiliated with ASU could be the host or co-host of a Zoom room during the conference.

Luckily, we had a number of dedicated students and staff who were willing to host live sessions during challenging time slots (like 3 am). We trained the “tech hosts” and practiced quite a bit before feeling confident for the first conference. Although most live events are technically uneventful, we wanted to avoid major problems if something happened. We asked moderators and panelists to come to the Zoom room 15 minutes before the start of the live session. Most of them did. We wanted to use these 15 minutes to make sure everything was working properly, and to let panelists and moderators coordinate the order of activities during the live event and how chat messages would be handled.

Sometimes panelists did not show up, and the moderator had to improvise. A few times a panelist did not feel confident to speak English and expected the moderator to translate, sometimes a moderator didn’t show up until the last minute which made those sessions less organized, and sometimes a panelist had bandwidth problems and it had to be discussed how to handle it before going live.

The tech host had a list of whom to expect as panelists and moderators (although sometimes people have a Zoom name other than their actual name) and allowed them into the room to prepare for the session. At the starting time of the live session, the tech hosts muted themselves, turned off their camera, and allowed people in the waiting room to enter the Zoom room. Attendees were automatically muted when they entered the room. At this time the tech host also disabled the waiting room so that attendees could come straight in.

Typically, the moderator waits for a minute to start. This allows people to connect to the Zoom room. Attendees may come from a previous session, or have a calendar notification for the start time of the event and need a minute to log in. We advised the moderators to invite attendees of a live session to share their cameras, if they felt comfortable doing so, to allow for a more informal atmosphere. The custom was that people mute themselves if they do not speak, and sometimes the tech host had to mute somebody if this did not happen and caused undesirable background noise. The tech hosts had coordinated with the moderators on how to be warned when the end time of the session was getting near.

When the moderator closes the session, the tech host stops the recording. Sometimes there is some informal chat after the session, but often the tech host stops the session. The recording of the live session is automatically started when the tech host starts the session 15 minutes before the start of the live session. The preparation discussion is edited out before the recording is posted. We recommend automatic recording since it is not unimaginable to forget to start recording manually.

We created a tutorial for the tech hosts, and you can find the version from October 2021 here.

Communication with conference participants: In the weeks before the conference, we started communicating with moderators and panelists of the live sessions. We learned that it is important to have everyone involved, not just the moderator. We explained the goal of the live sessions and the roles of the moderator and the panelists. We emphasized that a good session requires people to come prepared, watch videos related to the session in advance, and prepare some discussion points in advance. We strongly discouraged repeating the pre-recorded presentations during the live sessions, but it still happened a few times, and those sessions were not well received. A moderator was expected to engage with the panelists in the days before the session, and to prepare some discussion points and some broader aims for the session.

Once the program was known we sent this information to all people registered. We did the same when the conference website was ready. Once the conference website was ready, we sent one daily email to provide a short message and remind people about some aspects of the conference (such as how to sign up to the Slack channel, or how to give comments on pre-recorded videos). When the conference started, daily emails gave a brief preview of the program of that day. Since many registrants would use those emails to participate in the conference, and not go back to previous emails, we included their conference key in those daily emails.

Now that we have done 10 conferences and learned what worked well, we suggest having better information about the nature of the live sessions in the call for abstracts. When we planned the conferences, we had not expected almost everyone to assume by default that they would participate in a live session, and many assumed their video would be shown and they would be available for questions. This seems to be an approach in many other virtual conferences. Having to prepare for the session by watching other videos was not understood by everyone. But many did and this led to very lively debates among the panelists and with the rest of the participants in the sessions.

Website: The conference websites were only accessible to those who had registered. The website for each conference contained the schedule, with a ‘check the time in your time zone’ link and a one-click option to put the event on one’s agenda. The pre-recorded talks were available on the website, and participants could comment on those videos. We also included a short video tutorial to introduce the various parts of the website. Via the website one could also go to the Wonder.me virtual room to hang out with other participants or add songs to the Spotify playlist.

One question we received in many conferences is whether videos would be made public. Since conferences are closed events where people have not provided explicit consent to make the information public, the recordings remain only accessible via the conference website. Since some of the participants, especially practitioners, shared confidential information, it was important for us to keep the recordings available for registered participants only. Now that the conferences are over the recordings are available for all IASC members, who are required to log in first (and non-members could access the material till the end of 2021 for a small fee).

Social media: If time allowed, we used social media (Twitter and Facebook) to promote upcoming sessions and to post reminders 30 minutes before a live session started. Posters were created with basic information about the sessions, and those were typically used for social media. We also shared those posters with the moderators and session organizers, so that they could distribute them and promote the live events too.

Lessons learned

Here is a list of lessons learned. In general, we were pleased with how the events went, and the feedback has largely been very positive, but we realize that virtual conferences will evolve in the coming years. It is especially challenging to engage participants in a virtual conference since people do not block their agenda for a virtual conference like one does for a conference in person.

Zoom stores statistics on who participated in the Zoom meetings and when. This allowed us to check how many people attended a live session for at least 5 minutes. On average 27 people participated per session. The attendance varied from 7 to 72 among the 180 live sessions. We know that recordings of live sessions were often watched later, especially since time zone differences meant that not all sessions were accessible live to all participants.
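The attendance count described above can be sketched as follows. This is not our actual analysis script, and the row layout `(session, participant, minutes)` is a hypothetical simplification rather than Zoom's export format. The sketch sums the minutes of participants who joined a session more than once and counts those who reach the 5-minute threshold.

```python
from collections import defaultdict
from statistics import mean

MIN_MINUTES = 5  # threshold used in the text


def attendance_per_session(rows):
    """Count participants per session whose total time is at least MIN_MINUTES."""
    minutes = defaultdict(float)
    for session, participant, mins in rows:
        # A participant who reconnects appears in several rows; add them up.
        minutes[(session, participant)] += mins
    counts = {session: 0 for session, _ in minutes}
    for (session, _), total in minutes.items():
        if total >= MIN_MINUTES:
            counts[session] += 1
    return counts


# Hypothetical example rows: (session id, participant, minutes connected).
rows = [
    ("S1", "ana", 3), ("S1", "ana", 4),   # reconnected, 7 minutes in total
    ("S1", "ben", 2),                     # below the threshold
    ("S1", "chi", 50),
    ("S2", "ana", 45),
    ("S2", "dev", 4.5),                   # below the threshold
]
counts = attendance_per_session(rows)
average = mean(counts.values())
print(counts, average)
```

Identifying participants by an email address or registration key instead of a display name would make the count more reliable, since Zoom names do not always match actual names.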

Nevertheless, it is remarkable that the majority of conference participants attended only 1 or 2 sessions, typically the sessions where they functioned as panelists or moderators. Luckily there was a substantial group who attended a large number of live sessions, but those numbers underline the challenge that people like to give a talk about their own work, but are less likely to listen to the contributions of others. Therefore, it was important that within each session participants focused on discussions among different contributions.

Make it easy for people to put the live events on their calendars. Since virtual conferences happen at times when people are doing other activities too, participants will be selective about which sessions to attend, and therefore it is helpful if people can put the events they would like to attend on their agenda.

There is a variety of internet connectivity among the participants, so try to make use of low-tech solutions. Yes, it would be amazing to have an immersive 3D experience for networking, but we used the basic wonder.me room for people to mingle due to its simplicity.

We had conference chairs who worked with a steering committee on the content of the conferences. Since we were new to organizing these kinds of conferences, communication was not always perfect. Since chairs may not understand the logistical constraints, they may not always provide the information on time, or respond promptly and correctly to inquiries from participants. Now that we have more experience, we recommend providing a clear set of expectations of which actions the chairs need to take, and when (dissemination, call for abstracts, review of submissions, feedback on schedule, communicating with moderators, etc.).

Have some redundancy in the team and program. It is no surprise, but surprises happen. Internet connection at the tech-host’s location fails, a computer crashes, a family tragedy does not allow a tech-host to participate or Zoom introduces a new feature that causes technical problems. So, have back-up people available to help.

We were not located in the same place due to the pandemic and operated from our homes in different parts of the USA. We had a clear schedule that listed for each session who was the tech host, the Zoom link, and who was expected as panelists and moderators. Caren Burgermeister was the contact person for any emergencies, and she was called in the early morning hours to cope with technical problems a tech host experienced.

With good preparation, virtual conferences are rewarding, especially when you see participation from scholars and practitioners who normally would not attend an international conference.

I recently saw the Dutch documentary Why we cycle, which provides an integrated perspective on the role of cycling in Dutch society. Not only does cycling allow people to go from A to B in a densely populated country like the Netherlands, it also provides other benefits such as cognitive and physical exercise, and it stimulates random encounters between people from different parts of society, reinforcing the egalitarian societal landscape. People meet and get into a conversation while waiting for a traffic light to turn green.

Obviously the biophysical context, a flat country, enables the use of bikes (but note that it rains every other day, and the Dutch will continue to use the bicycle, even if it snows). The infrastructure for bicycles is excellent in the Netherlands, while the dense occupation makes it impossible for people in cities to use cars in a convenient way. As such, the use of bicycles is also a cost-effective solution to mobility problems. And as the Prime Minister and the King also use the bicycle, there is a strong social norm to use the bicycle for short to middle distance trips.

An important lesson is to vary between focused and unfocused attention. Your brain keeps working on problems when you are not concentrating on them. It is important to start studying early and make use of this background brain processing. Spending many hours at the last minute before an exam is very ineffective. And practice. Do your homework and practice. You don’t acquire skills in sports or music by just trying it once, you have to practice!

To get a brief overview of her book, see her TED talk.

On July 19th a bridge collapsed on the I-10, which connects Phoenix with Los Angeles. Heavy rain caused flash flooding, and the resulting erosion caused the eastbound bridge to give way. As a consequence the I-10, the main highway between Arizona and California, was closed for a week and travelers had to drive a few hundred miles extra to reach their destination.

Is the bridge collapse a rare accident or can we expect more due to increased intensity of rainfall events and lack of maintenance of bridges (and infrastructure in general)?

This bridge collapse coincided with me reading the book “Too Big to Fall” by Barry LePatner, a construction lawyer from New York City. LePatner discusses a number of cases in detail, such as the collapse of the I-35W bridge in Minneapolis, and provides a historical analysis of the incentives to build and maintain infrastructure. Unfortunately, the incentives are tailored to building new roads and bridges (where the costs are shared with the federal government), and maintenance tends to be postponed. Furthermore, there has been a lack of coordination on how inspections need to be done. While there are now standard inspections, new technologies might be used to create smart infrastructure that provides relevant information more frequently on key indicators of the structural functionality of bridges.

His students created a website where you find animations, news updates and a game to get immersed even more in the rules and the environment. Although rules and regulations may sound like a boring topic, Steinberg is doing a great job of making it engaging and raising awareness.

I wonder how the developers deal with trolls, those gamers who purposely want to collapse the system. Anyway, check out the trailer and see whether this would be an interesting alternative to the robust worlds.

During the recent winter break I was introduced by some younger family members to Clash of Clans and Boom Beach, which are strategy games you can play on your iPad or other devices. You make investment decisions for defense and attack infrastructure as well as infrastructure to extract resources. For example, in Boom Beach (see figure below) you occupy an island and use gold and wood resources to build and support your army (to attack the islands of other players and rob their resources). There is a sawmill that generates construction material, and it does not reduce the amount of forest on the island. In fact, if you have collected sufficient gold from the unlimited gold resource, you can increase the capacity of the sawmill, which will not affect the number of trees on the landscape.

It might be an interesting challenge for the gaming industry to try to capture relevant resource dynamics, such that people learn not only to develop complex strategies to combat other armies but also gain a better understanding of the complex dynamics between the short-term benefits of resource extraction and the long-term consequences for a livable planet.

There is increasing concern over the repeatability and reproducibility of computational science (see also here, here, here, here and here). If computational scientific enterprises want to be cumulative, more transparency is required, including the archiving of computer code in public repositories. This also holds for agent-based modeling, an increasingly popular methodology in the social and life sciences.

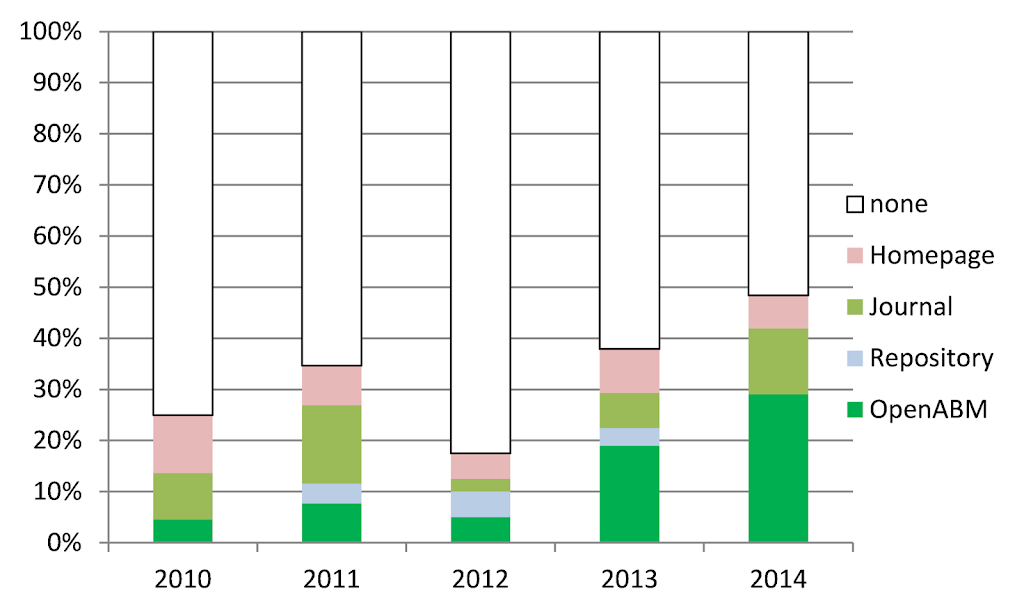

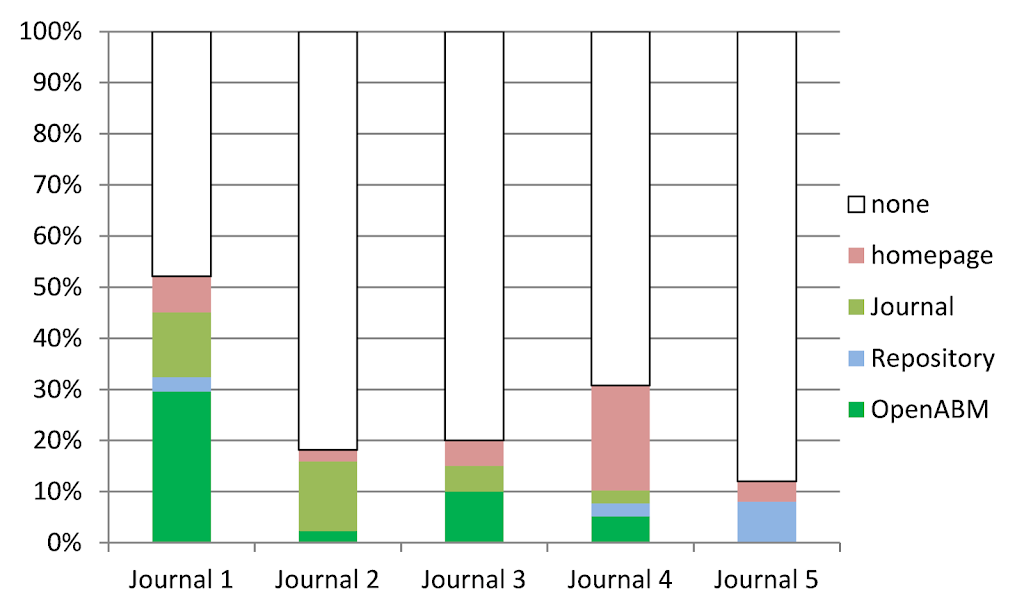

I show here some initial results of an analysis of the practice of archiving agent-based models. Five journals were selected that regularly publish research that uses agent-based models: Advances in Complex Systems, Computational and Mathematical Organization Theory, Ecological Modelling, Environmental Modeling and Software, and Journal of Artificial Societies and Social Simulation. Using the ISI Web of Science we searched for all articles in those 5 journals in the years 2010 to 2014 using the search term “agent-based model*”. This resulted in 255 articles on September 5, 2014, of which 56 articles were disregarded since they did not discuss an agent-based model itself.

Out of the 199 remaining articles, 135 were found not to provide the computational model’s source code. 21 articles referred to an institutional or individual homepage. In 5 of those cases, the link resulted in a 404 not found error and we recorded that the code was not available. In 17 cases the code was included as an electronic appendix of the journal. Only 31 articles provided the model code in a public archive, out of which 26 were stored at the CoMSES Computational Model Library. The other 5 models were archived in repositories like Bitbucket, GitHub, Google Code, SourceForge and the NetLogo community models site.
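As a quick consistency check on these counts, the small sketch below adds up the categories. It rests on one assumption that is not stated explicitly above: the 5 broken homepage links are counted among the 135 articles without available code, so only 16 of the 21 homepage links count as a separate category. Under that reading the categories sum to the 199 articles.

```python
# Counts taken from the text.
found = 255
disregarded = 56
remaining = found - disregarded  # articles that discuss an agent-based model

homepage_links = 21
broken_links = 5  # assumed to be included in the "no code" count below

categories = {
    "no code available": 135,
    "homepage with working link": homepage_links - broken_links,
    "journal electronic appendix": 17,
    "public archive": 31,
}

accounted = sum(categories.values())
public_share = round(100 * categories["public archive"] / remaining, 1)
comses_share = round(100 * 26 / categories["public archive"], 1)

print(remaining, accounted)   # both should be 199 under the assumption
print(public_share)           # percentage of articles with a public archive
print(comses_share)           # percentage of those stored at CoMSES
```

In other words, roughly one in six of the articles made the model code available in a public archive.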

Over the years there has been improvement in model archiving. In 2010, 75% of models were not archived. The increasing availability of public archives has enabled authors to archive their models more frequently, and in 2014 50% of the models were archived. The majority of those models are archived in OpenABM. As we can see, most models are still not archived. One journal has championed model archiving, with more than 50% of its publications associated with a publicly archived model, whereas the other journals have an archiving percentage between 10% and 20%.

Since most research is sponsored by tax money, sponsors sometimes explicitly require that the data, including software code, be made publicly available. We find that papers funded by the 2 main sponsors (16 by the European Commission and 21 by the National Science Foundation) show a low rate of compliance with best practices. In both cases we find that only 15% of the models are available in public archives, significantly lower than for the articles that do not list a sponsor (29%) or list other sponsors (24%).

Currently the scope of the analysis is being extended to about 3000 articles (using the search term agent-based model*, unrestricted to years and journals). Besides getting a better picture of current archiving practices, we also hope this activity leads to more awareness of the problem and of the need for journals to increase requirements for archiving code and documentation in public repositories.

So citizens have started solving science problems, but a harder problem is getting scientists to do their research in the open: open science, which enables others to build on it. The incentive structures in science are perverse when it comes to stimulating discovery. In fact, we use incentive structures from the 19th century, ignoring the potential increase in knowledge production if we used a 21st-century approach. The networked open science approach that Nielsen aspires to faces major challenges because scientists have incentives to publish results in high-profile journals but get no recognition for sharing data or computer code, which are often the main outcomes of a project.

As we have experienced in the development of OpenABM, scientists like to download the models of others but are reluctant to archive their own work. Furthermore, journals are reluctant to raise their standards of transparency, and sponsors like the National Science Foundation require data management plans but do not invest sufficiently in the cyberinfrastructure needed to make this possible.

Although there is self-organization at the small scale in the science community, the sponsors need to step up and invest seriously to make a science for the 21st century possible.

Mancur Olson introduced the concept of roving and stationary bandits to explain why dictators (stationary bandits) have a self-interest in keeping the society they rule productive, so as to maximize the rent they can collect from the population. This is in contrast to roving bandits, who have no obligation to provide protection to the population or to keep the land productive. Roving bandits just plunder and steal. A stationary bandit uses taxation.

With the development of nation states during the last few centuries, we seem to have entered a period without roving bandits. Some countries have democratic systems, others autocratic ones. But in recent years we seem to observe the re-emergence of roving bandits, as a nasty side effect of panacea thinking about the benefits of market solutions and democratic systems. Note that democracy is typically portrayed as voting, not as the involvement of people in decision making, which is the key to democracy according to Vincent Ostrom.

We have multinational companies that move around to avoid taxation and exploit natural resources. When resources are depleted, they can move on to other countries. Whether this is shrimp farming in rice fields in Southeast Asia, gold mines in Africa, or soybean production in Latin America, the multinational companies lack the incentives to care about the long term for the people, and local governments are often too weak to implement and enforce regulations to reduce the negative impact.

Former stationary bandits

At a different scale, we see roving bandits emerging in Northern Africa and the Middle East. The so-called Arab Spring led to the removal of some dictators who suppressed a large part of their population. Unfortunately, we are now learning that those dictators were able to provide some security compared to the anarchy currently ruling in ‘countries’ like Libya, Syria and Iraq. Those dictators were able to suppress the violence between different ethnic and religious groups within their countries. These suppressive regimes favored their own ethnic and religious groups, but compared to the current anarchy, we may wonder whether everyone did benefit.

Douglass North and his colleagues published a book in 2009 on how difficult it is for societies to transition from controlling social order via violence to controlling social order via votes and democratic institutions. Those transitions have been rare, have taken a long time, and are embedded in a long history of social norm development that supports democratic institutions. As we have seen in recent years, removing dictators does not by itself establish a less violent and more prosperous society. I don’t have a solution to this problem, but we should at least learn from history and avoid creating more anarchy, as has happened in recent years.